Prereqs: Linear algebra, and Math3ma’s and Jeremy Kun’s wonderful intros to tensors and tensor products

Intro

TENSORS ARE NOT ARRAYS OF NUMBERS! ok well…they kinda are. But that’s a wildly uninformative description, and it seriously confused me when I was first trying to understand tensors. The standard algebraic “universal property” introduction suffers from a different flavor of incomprehensibility: for a mortal like me, the barrage of equations and constructions defining the tensor product was hardly intuitive, and I walked away having no idea why these things were useful (caveat: these constructions are crucial to eventually understand, but nightmarish to begin with). I’m hoping to clarify tensors a bit in this article for past Michael, and for anyone else who likes their math well motivated and chock-full of intuition.

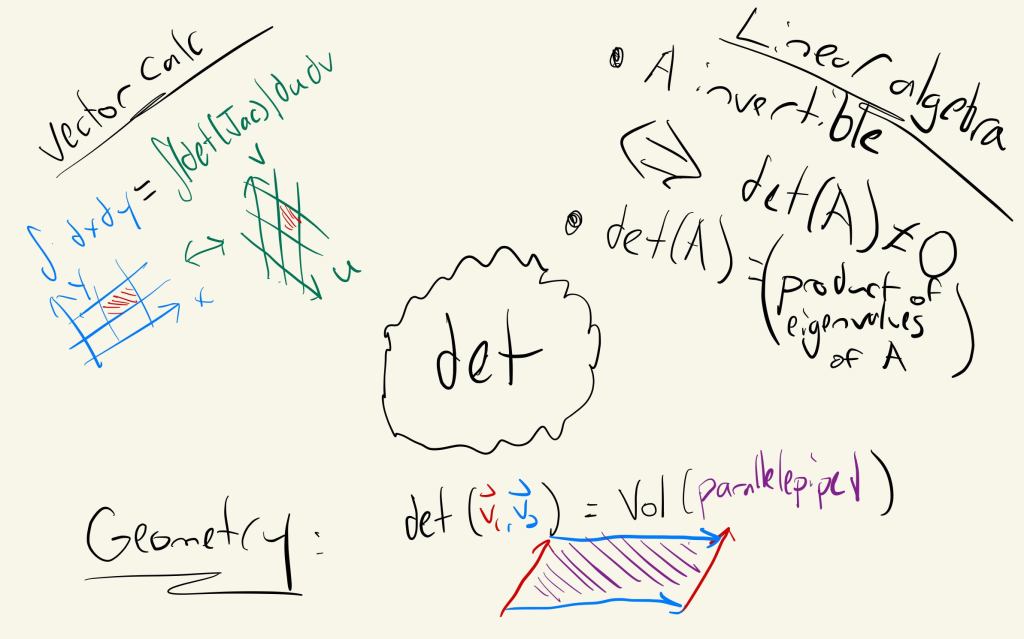

Determinants show up all over the place! And their uses are all intimately related (geometry does that)

As with many objects in mathematics, the best way to get a feel for what tensors capture is through a good example. We’re going to look at a very special tensor, one that you’ve been using ever since vector calc and linear algebra: the determinant. If you’re like me, you had no idea why this magic gadget with an arcane definition appears in so many deep ways, as in the multidimensional change of variables formula via determinants of Jacobians, or as an invertibility detector in linear algebra. The determinant is intimately related to one of the beating hearts of mathematics: measuring volumes.

Determinants: recall linear algebra

Think back to linear algebra.

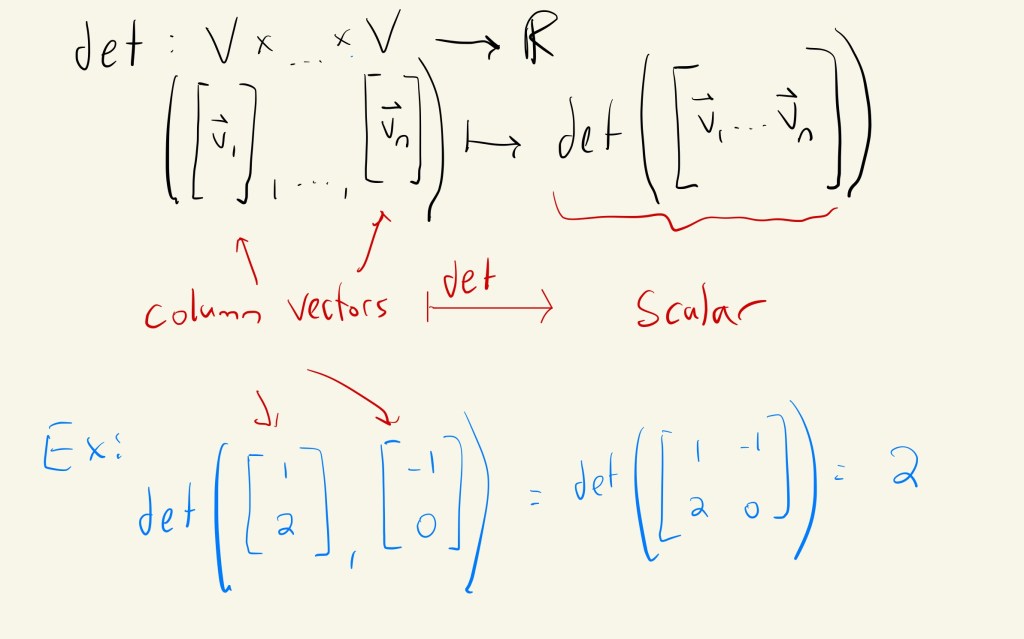

Linear algebra drilled into us that determinants are functions of matrices. They are, but as with anything in math, that’s only a small part of the whole picture. In this section, I want you to totally forget that idea and back up a bit. Let’s think about a determinant as a special kind of function: one that eats up vectors and spits out a number. So how should this function work? Just treat the vectors as the (ordered) columns of a matrix, then compute the determinant of that matrix.

Think of the determinant as a special function of many vectors!
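To make the "eats vectors, spits out a number" idea concrete, here is a minimal sketch in plain Python. The function name `det` and the cofactor-expansion approach are my own choices for illustration (fine for tiny examples, far too slow for big matrices); the point is only that the inputs are vectors, not a matrix.

```python
def det(*columns):
    """Determinant of the square matrix whose columns are the given vectors.

    Illustrative only: uses cofactor expansion along the first column.
    """
    n = len(columns)
    if n == 0 or any(len(c) != n for c in columns):
        raise ValueError("need n vectors, each with n entries")
    if n == 1:
        return columns[0][0]
    first, rest = columns[0], columns[1:]
    total = 0
    for i, entry in enumerate(first):
        # Drop row i from the remaining columns to form the minor.
        minor = [c[:i] + c[i + 1:] for c in rest]
        total += (-1) ** i * entry * det(*minor)
    return total


# Two vectors in, one number out.
u, v = (3, 0), (1, 2)
signed_area = det(u, v)  # 3*2 - 0*1 = 6
```

Note that the order of the arguments matters, which is why the columns are described as ordered.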

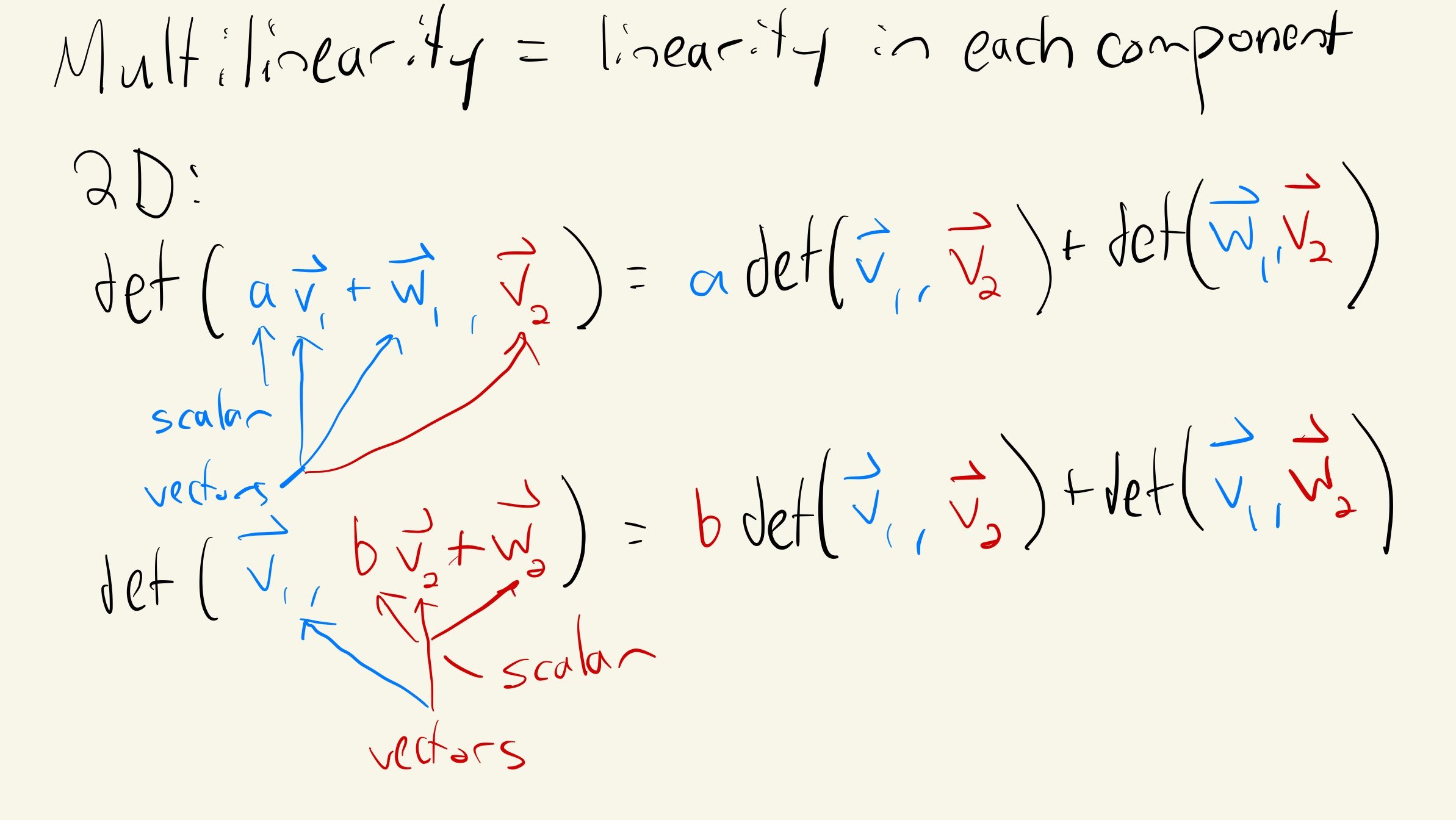

Linear algebra also taught us to really appreciate functions that respect the vector space structure: meaning, we like linear functions. Determinants are not linear as functions of the whole matrix, but they are linear in each column separately. Algebraically, this means…

Multilinearity: each component is a linear function
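Spelled out in symbols (the names here are my own choice for illustration: columns $v_1, \dots, v_n$, a second vector $w_i$ for the $i$-th slot, and scalars $a, b$), linearity in the $i$-th column with all the other columns held fixed reads:

```latex
\det(v_1, \dots, a\,v_i + b\,w_i, \dots, v_n)
  = a\,\det(v_1, \dots, v_i, \dots, v_n)
  + b\,\det(v_1, \dots, w_i, \dots, v_n)
```

This has to hold for every slot $i = 1, \dots, n$, one slot at a time.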

So determinants are multilinear, not linear. This brings us to the definition of a tensor: a tensor is a multilinear function $T: V_1 \times \cdots \times V_k \to F$ from a product of vector spaces over a field $F$ (usually $\mathbb{R}$ or $\mathbb{C}$). Note that we often especially care about the case where we have many copies of the same vector space, $V_i = V$ for all $i$. This is the case for the determinant, which formally is an alternating $n$-tensor on real vector spaces. Don’t worry, we’ll unpack the meaning of that last sentence. Eventually. After we see what the heck this algebra means, geometrically.

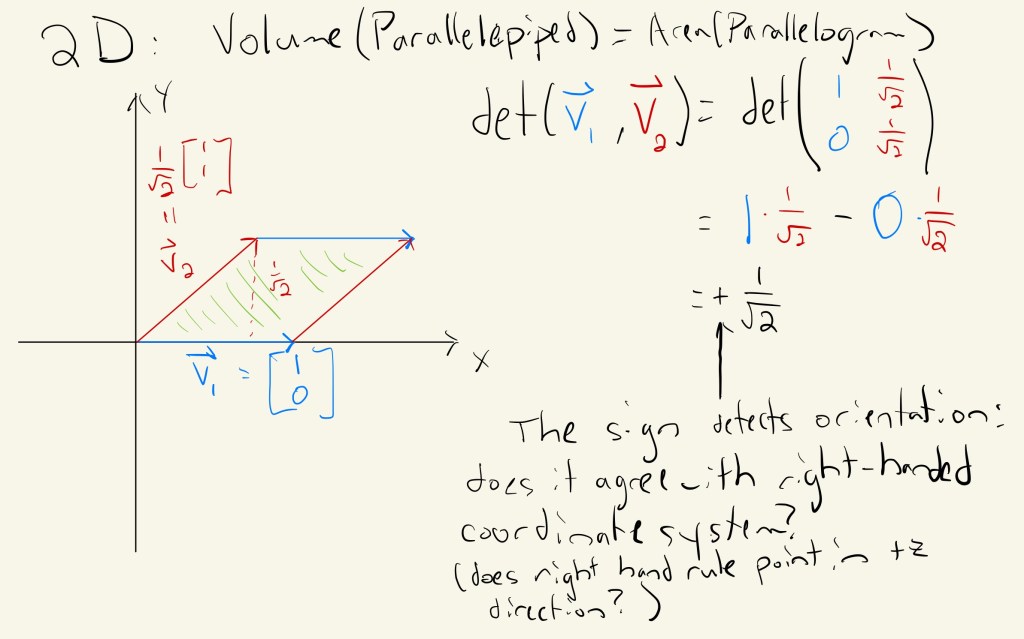

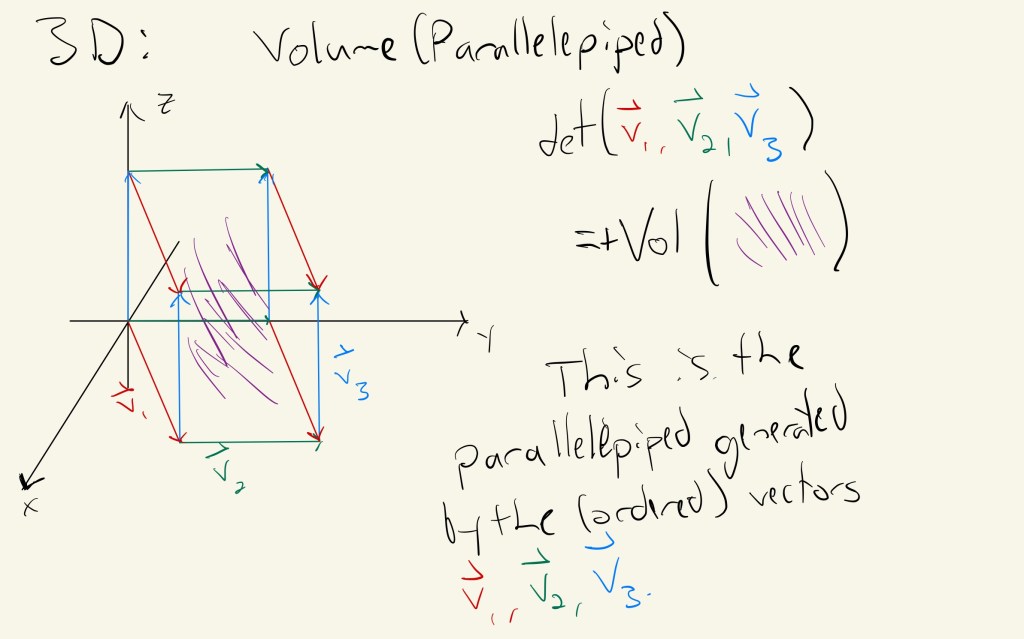

Determinants measure volume: a special tensor

In vector calculus, we learn an important fact: the determinant computes the (oriented) volume of the parallelepiped spanned by the columns (or rows) of a matrix. This really is a lot simpler than it sounds: look at the pictures!

In 2 dimensions, the determinant of two vectors returns the signed area of the parallelogram they define. The sign detects orientation: check the right-hand rule!

In 3 dimensions, determinants compute the volume of the parallelepiped. Neat, right? The sign still detects orientation with respect to the right-handed coordinate system. In fact, that’s one way of defining orientation of “simple” volumes!
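If you’d like to poke at this numerically, here is a small sketch. The helper names `det2` and `det3` are hypothetical, written out by hand from the usual 2×2 and 3×3 formulas rather than taken from any library.

```python
def det2(u, v):
    """Signed area of the parallelogram spanned by 2D vectors u and v."""
    return u[0] * v[1] - u[1] * v[0]


def det3(u, v, w):
    """Signed volume of the parallelepiped spanned by 3D vectors u, v, w."""
    return (u[0] * (v[1] * w[2] - v[2] * w[1])
            - u[1] * (v[0] * w[2] - v[2] * w[0])
            + u[2] * (v[0] * w[1] - v[1] * w[0]))


u, v = (3, 0), (1, 2)      # base 3, height 2
area = det2(u, v)          # 6: turning from u to v is counterclockwise
flipped = det2(v, u)       # -6: same parallelogram, opposite orientation

box = det3((2, 0, 0), (0, 3, 0), (0, 0, 4))  # 24: a 2 x 3 x 4 box
```

Swapping the two inputs leaves the parallelogram alone but flips the sign, which is exactly the orientation information the captions mention.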

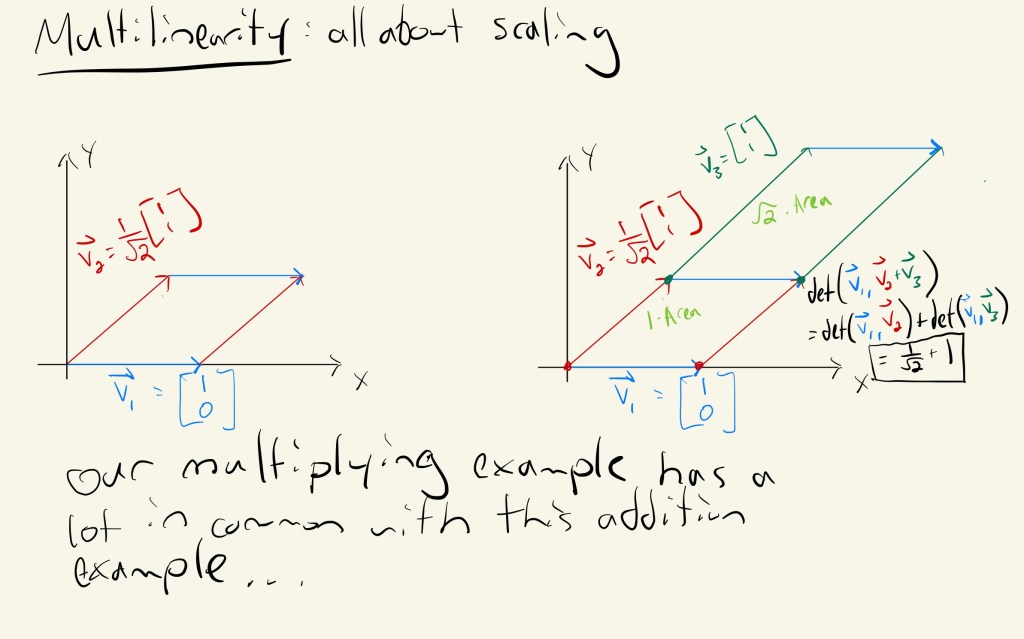

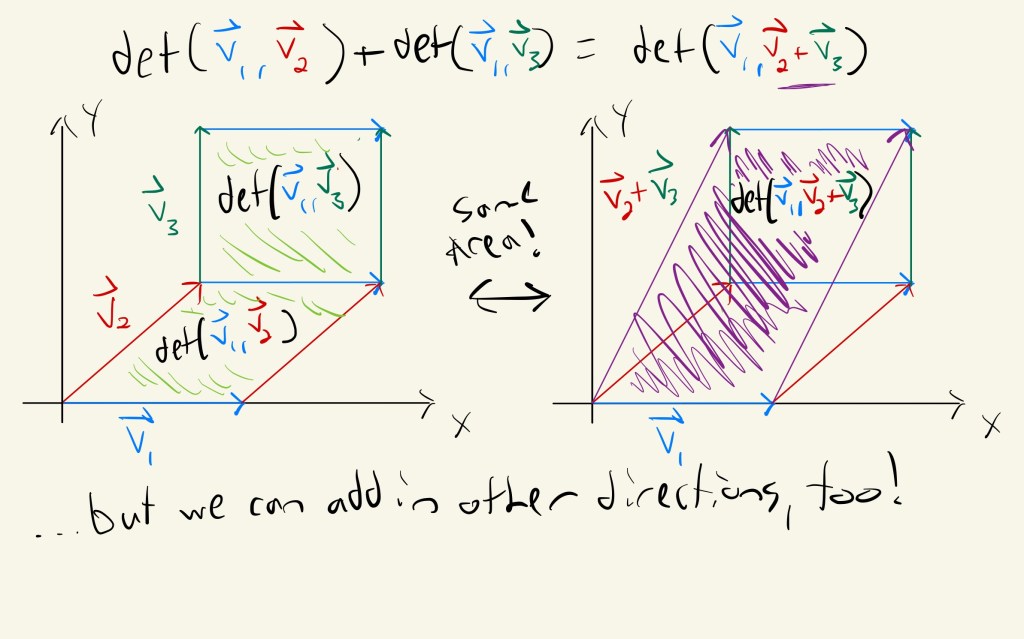

I didn’t spend any time deriving why the determinant computes this volume, mostly because there are plenty of lovely explanations of this online already. But I do want to draw attention to a key feature of the determinant (and of all tensors!): multilinearity. This algebraic feature is the core of any and all tensors: just as linearity is ubiquitous across mathematics, both pure and applied, multilinearity shows up a TON. Sometimes it has clear geometric or physical meaning, as in the cases of determinants, stress-energy tensors, or Riemannian metrics, but it is just as essential in fields with less concrete interpretations, e.g. representations of symmetry in quantum mechanics, advanced circuit theory, and machine learning (I mean come on…it’s called TensorFlow, isn’t it?). Luckily for us, multilinearity in the case of the determinant is highly geometric: it reflects how volumes scale when we change side lengths.

Multilinearity = volume scaling! Linearity in each component describes how the volume scales as we adjust side lengths by scaling…

Scaling one input of the determinant by a number corresponds to stretching that one side vector and then forming the parallelepiped. The total volume scales linearly with each individual vector, and this is what we meant when we said “tensors are linear in each component”.

Multiplication has some close connections to addition…

…but it works for general vector addition too! Ahhh…linearity and geometry. It’s a beautiful thing.
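Both halves of multilinearity (scaling one side, and adding vectors in one slot) can be checked on a small 2D example. This is just a sanity check on hand-picked integer vectors, not a proof; the helpers `det2`, `scale`, and `add` are hypothetical names introduced for the sketch.

```python
def det2(u, v):
    """Signed area of the parallelogram spanned by 2D vectors u and v."""
    return u[0] * v[1] - u[1] * v[0]


def scale(c, u):
    """Scalar multiple c * u."""
    return tuple(c * x for x in u)


def add(u, v):
    """Vector sum u + v."""
    return tuple(x + y for x, y in zip(u, v))


u, v, w = (3, 1), (1, 2), (-2, 5)
c = 4

# Stretching one side by c scales the area by c.
scaling_ok = det2(scale(c, u), v) == c * det2(u, v)

# Adding vectors in the first slot adds the areas.
addition_ok = det2(add(u, w), v) == det2(u, v) + det2(w, v)

# The same works in the second slot, with scaling and addition combined.
second_slot_ok = det2(u, add(scale(c, v), w)) == c * det2(u, v) + det2(u, w)

# But det2 is NOT linear in the pair (u, v) as a whole:
# stretching both sides by c scales the area by c squared.
both_sides_ok = det2(scale(c, u), scale(c, v)) == c ** 2 * det2(u, v)
```

The last check is the "multilinear, not linear" distinction in action: a genuinely linear function would pick up one factor of `c`, not two.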

So for determinants, the algebraic properties of tensors capture how the volume of a parallelepiped responds to scaling and adding its side vectors! This leads to a number of interesting questions with deep consequences. For example, how does the volume transform when we change a basis? Does it grow, shrink, or stay the same? For those of you who know a bit of relativity or differential geometry, this should ring some bells from your prior exposure to tensors as objects that transform covariantly or contravariantly (or a mix of the two!) in response to basis changes. Such basis changes may arise as we move along a manifold, or after we act by a symmetry group on our space (like a Lorentz transformation). Be warned: physicists, and to a lesser degree mathematicians, play fast and loose with the word “tensor”, and will regularly jump between multiple objects while calling them all tensors. Such is life in applied mathematics.

Bonus property for next time: alternating tensors and antisymmetry

Are you still thinking about the sign that detects orientation? Good! This property is not a general tensor property, but a special property enjoyed by the determinant (and wedge products of differential forms!). Don’t worry, we’ll talk about this fascinating feature next time.

Food: Pizza night! Fat toasted almonds, cilantro chimichurri, and spicy salami. Messy and quite tasty.
