Prereqs: this other post introducing the determinant as a tensor. It wouldn’t hurt to know a bit about permutations and the symmetric group, but it’s not at all required.

Last time…

…we saw that the determinant can be thought of a special kind of tensor: a multilinear function from a product of vector spaces

to their common base field

(often

or

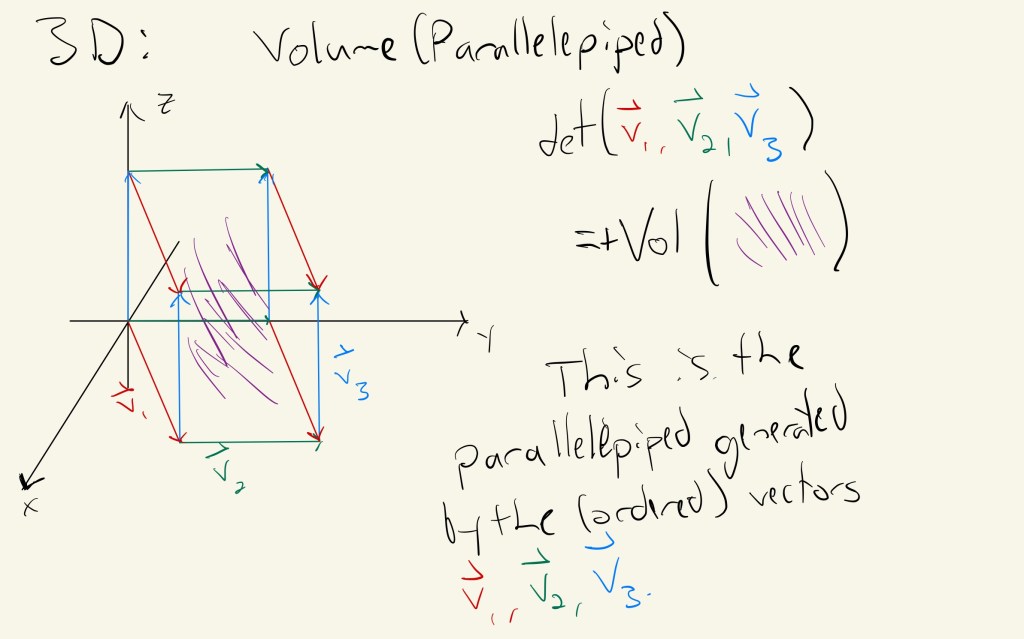

). More concretely, the determinant eats up vectors (in the form of columns of a matrix) and spits out a scalar which measures the oriented volume of the parallelepiped spanned by those vectors. It’s much easier to say in pictures:

The determinant computes the (oriented) volume as a function of side length vectors!

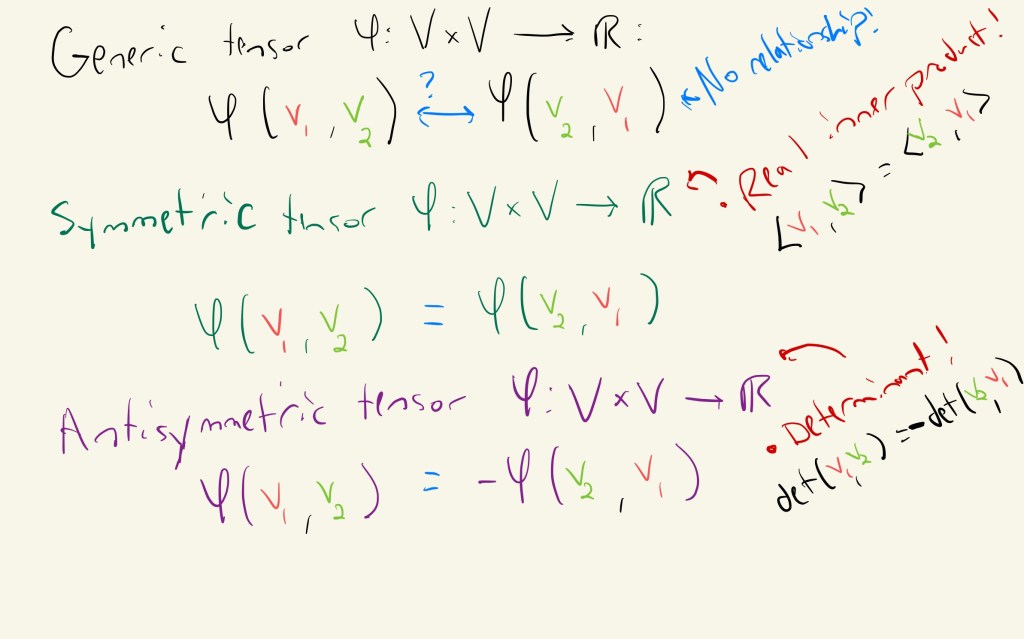

We talked about how multilinearity captures the scaling nature of the determinant: the total volume scales linearly as we vary one side length and leave the others fixed. Multilinearity is the signature property of all tensors. But the determinant has another property, one that a generic tensor doesn’t: antisymmetry. Antisymmetry, and its partner symmetry, are the defining properties of a world of fascinating tensors. Generic tensors don’t have any relationship between their input slots: if you swap two positions in the input, you can’t predict what happens to the output. But symmetric and antisymmetric tensors (the latter also called alternating tensors) describe special cases where permuting the inputs changes the output in a predictable way: for symmetric tensors, the output is unchanged, and for antisymmetric tensors, the output is multiplied by the sign of the permutation.

Swapping inputs in a generic tensor may produce wildly different outputs. Symmetric and antisymmetric tensors are special!
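To make the contrast concrete, here is a minimal sketch in plain Python. It assumes a 2-tensor on $\mathbb{R}^3$ written as $f(u, v) = \sum_{ij} u_i A_{ij} v_j$; the matrix `A`, the vectors `u` and `v`, and the helper names are all made up for illustration. Splitting `A` into the halves `(A + Aᵀ)/2` and `(A - Aᵀ)/2` is one simple way to manufacture a symmetric and an antisymmetric tensor from a generic one.

```python
def bilinear(A, u, v):
    """Evaluate the 2-tensor with coefficient matrix A on the pair (u, v)."""
    return sum(u[i] * A[i][j] * v[j]
               for i in range(len(u)) for j in range(len(v)))

def transpose(A):
    return [list(row) for row in zip(*A)]

def half_combination(A, B, sign):
    """Entrywise (A + sign * B) / 2."""
    return [[(a + sign * b) / 2 for a, b in zip(row_a, row_b)]
            for row_a, row_b in zip(A, B)]

# An arbitrary coefficient matrix: no special relationship between the slots.
A = [[1, 2, 0],
     [5, 3, 4],
     [7, 1, 6]]
sym = half_combination(A, transpose(A), +1)    # symmetric part of A
anti = half_combination(A, transpose(A), -1)   # antisymmetric part of A

u, v = [1, 0, 2], [3, 1, 1]

# Evaluate each tensor on (u, v) and on the swapped pair (v, u).
generic_uv, generic_vu = bilinear(A, u, v), bilinear(A, v, u)
sym_uv, sym_vu = bilinear(sym, u, v), bilinear(sym, v, u)
anti_uv, anti_vu = bilinear(anti, u, v), bilinear(anti, v, u)

print(generic_uv, generic_vu)   # unrelated values
print(sym_uv, sym_vu)           # equal
print(anti_uv, anti_vu)         # equal up to a sign flip
```

For these particular inputs the generic tensor gives 61 and 35, while the symmetric part gives 48 both times and the antisymmetric part gives 13 and then -13.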

What does this really mean? To start, let’s think about inner products and the determinant, excellent examples of symmetric and antisymmetric tensors. The moral will be that these special tensors measure some geometric quantity that remains invariant when we change our perspective. Swapping vector positions shouldn’t change the total volume or the angle between two vectors!

Symmetric tensors: an example through inner products

An inner product over $\mathbb{R}$ is a positive definite symmetric 2-tensor. Translation? (Note: this definition only works for $\mathbb{R}$ and not $\mathbb{C}$. Complex inner products are conjugate linear in one of the slots and thus not multilinear.)

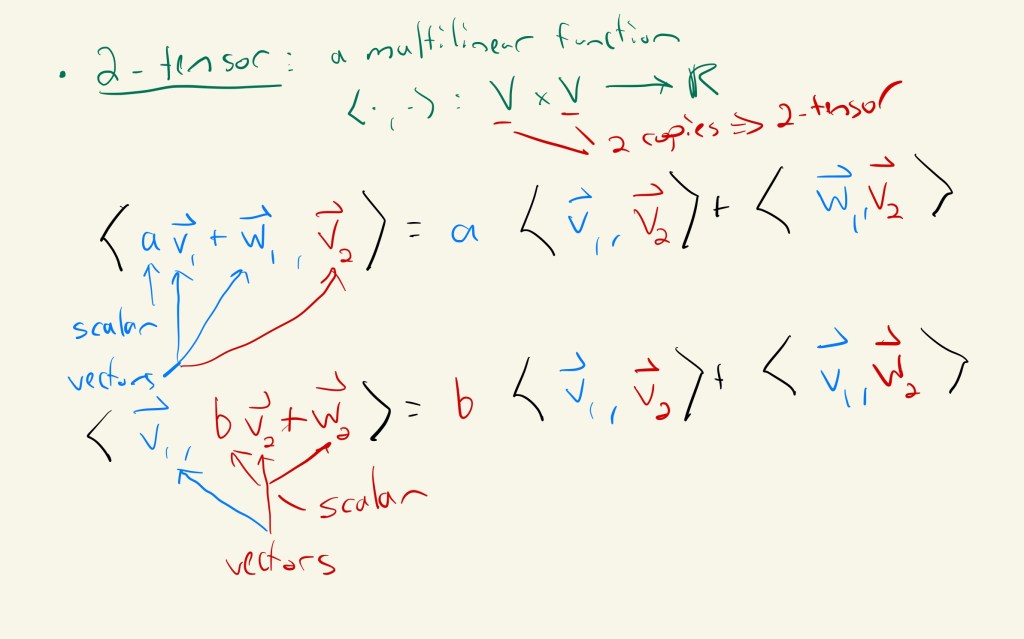

- 2-tensor: Tensors are multilinear functions, and the number indicates how many vectors the tensor eats up. So, a 2-tensor is a function of two vectors that is linear in each slot. 2-tensors arise so commonly that they have their own name: bilinear forms. A great deal of linear algebra is dedicated to classifying them, and their role in almost every major area of pure and applied mathematics is irreplaceable: they allow us to measure error in machine learning, proper time in special relativity, and correlations in signal theory.

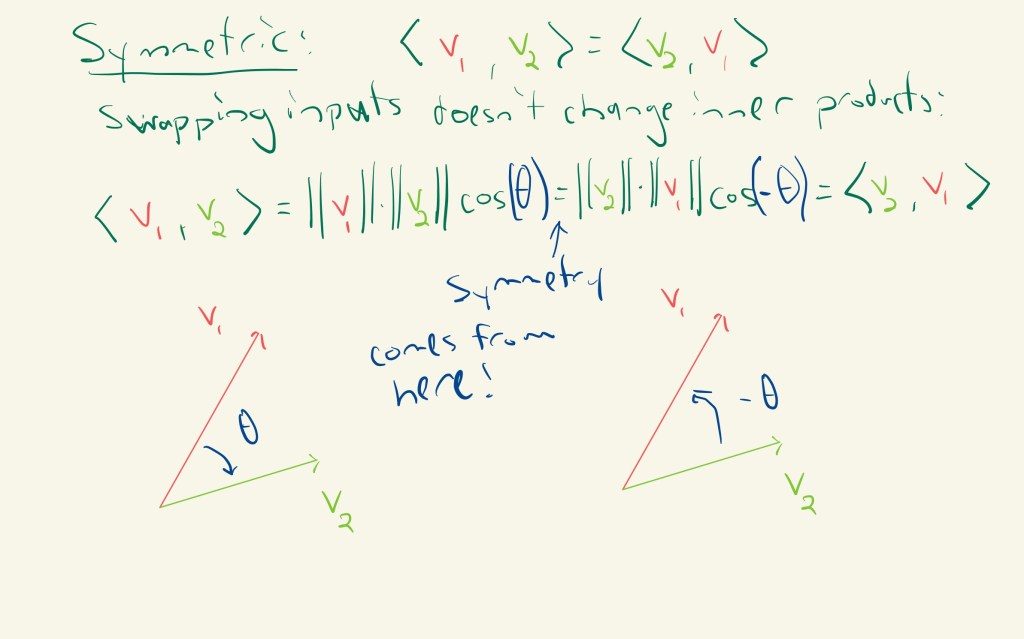

- Symmetric: This means that if we swap the two inputs, the output is unchanged. In other words, the inner product between two vectors shouldn’t depend on their order. In the case of the dot product…

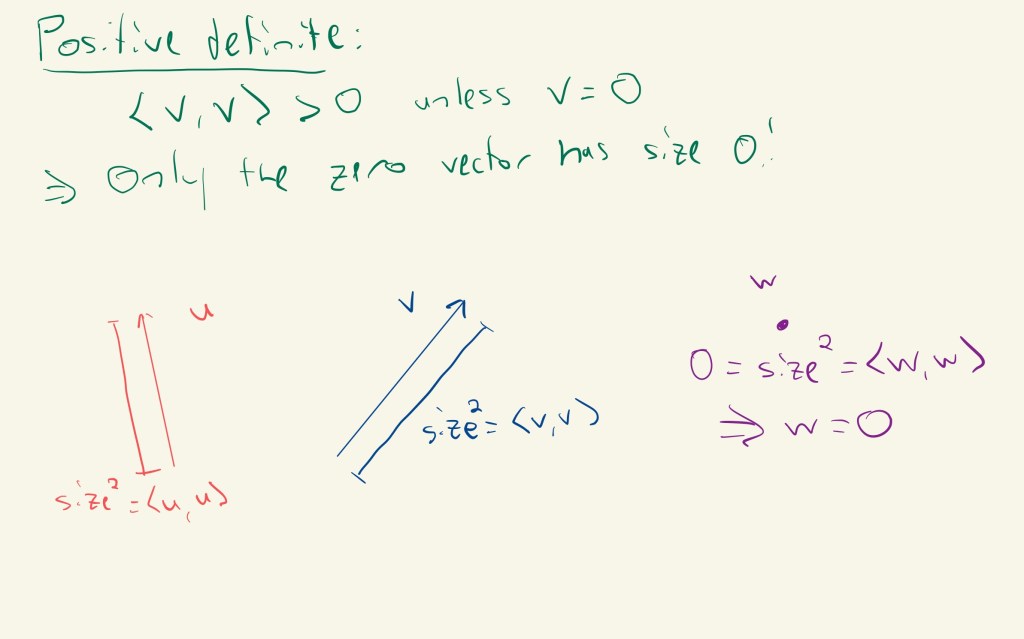

- Positive definite: Inner products give rise to norms by $\|v\| = \sqrt{\langle v, v \rangle}$, allowing us to measure the size of a vector $v$. Positive definite means that the size is always positive unless $v = 0$.
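The three properties in the list above can be spot-checked for the dot product. This is only a sketch on a few arbitrary vectors (`u`, `v`, `w` and the scalar `c` are made up for illustration), so it illustrates the properties rather than proving them.

```python
import math

def dot(u, v):
    """The dot product on R^n: the most familiar real inner product."""
    return sum(a * b for a, b in zip(u, v))

def norm(v):
    """Size of a vector, induced by the inner product."""
    return math.sqrt(dot(v, v))

# Arbitrary illustrative vectors and scalar.
u, v, w = [1, 2, 2], [4, -1, 2], [0, 5, -2]
c = 3

# 2-tensor: linear in the first slot (the second slot works the same way).
scaled_sum = [c * a + b for a, b in zip(u, w)]   # the vector c*u + w
linear_in_first_slot = dot(scaled_sum, v) == c * dot(u, v) + dot(w, v)

# Symmetric: swapping the inputs leaves the output unchanged.
symmetric = dot(u, v) == dot(v, u)

# Positive definite: nonzero vectors have positive size, the zero vector has size 0.
positive_definite = norm(u) > 0 and norm([0, 0, 0]) == 0

print(linear_in_first_slot, symmetric, positive_definite)
```

Integer inputs keep the comparisons exact; with floating point inputs you would want a tolerance such as `math.isclose` instead of `==`.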

See what symmetric did here? Saying that the inner product was symmetric gave us some geometric information: it told us that the order in which we measure the angle doesn’t matter. Inner products measure “similarity”, and symmetric meant that $v$ is as similar to $w$ as $w$ is to $v$. In other words, the inner product is left invariant under swapping the input vectors.

Antisymmetric tensors: an example through determinants

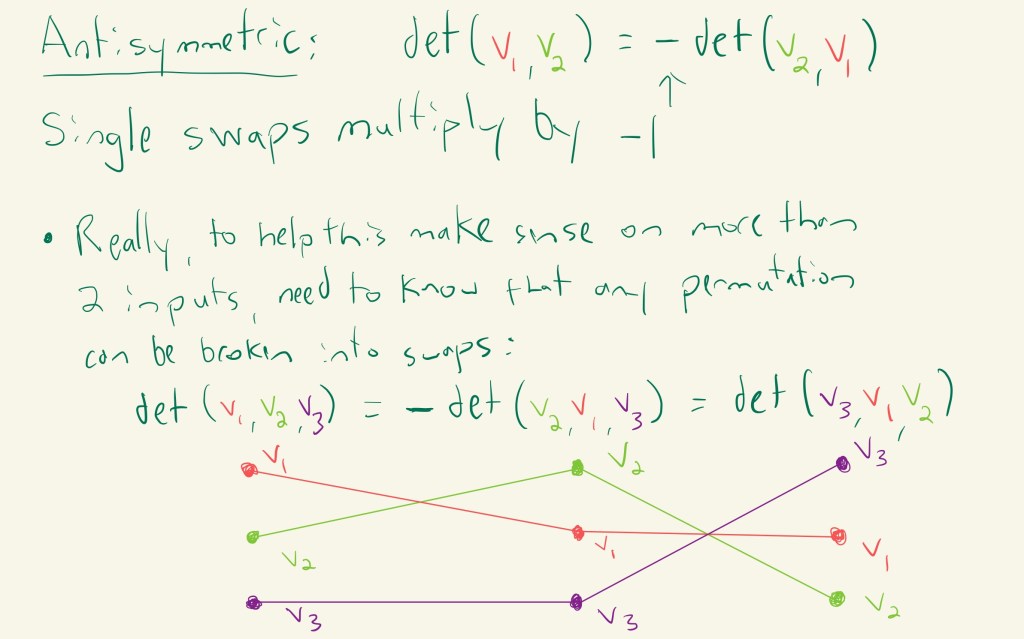

We spent a ton of time in the last post discussing why the determinant was tensorial: when we independently scaled our input vectors (thought of as the columns of a matrix), the determinant scaled linearly for each input vector. But we didn’t address the intriguing sign in front of the determinant. When we perform a single swap of our input vectors, the volume stays the same, but we multiply by $-1$. And since any permutation of inputs can be rewritten as a series of swaps, we can calculate the determinant for all permutations of given input vectors! If you’ve taken abstract algebra, this is captured by the statement that all elements in the symmetric group can be decomposed into products of 2-cycles, and the sign $\pm 1$ in front of the determinant is determined by whether the product has an even ($+1$) or odd ($-1$) number of 2-cycles. This property is called antisymmetry, and these tensors are sometimes called alternating tensors.

The determinant is an alternating tensor: it has antisymmetry

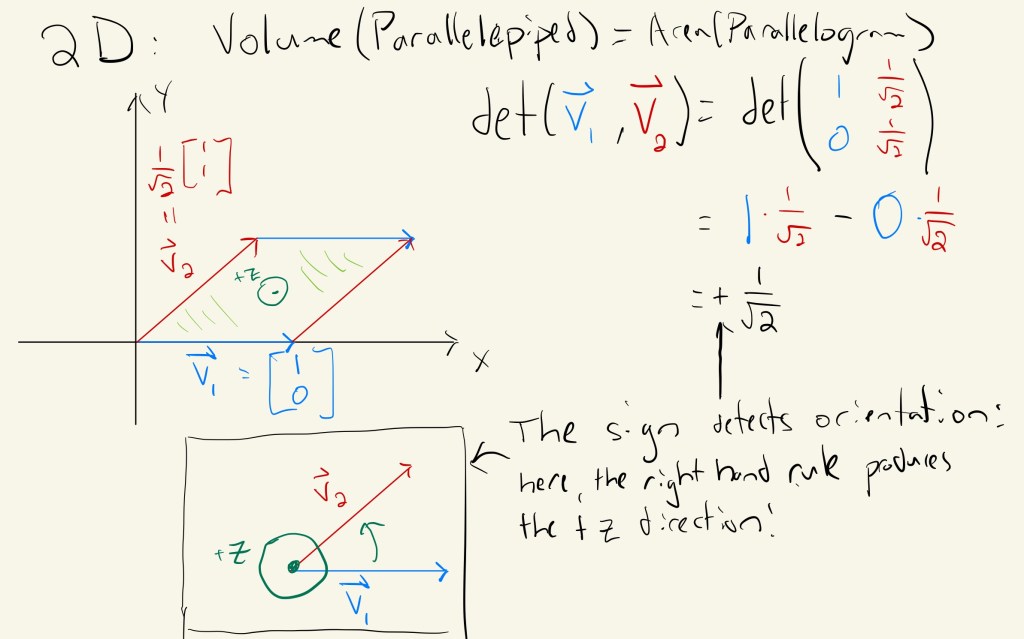

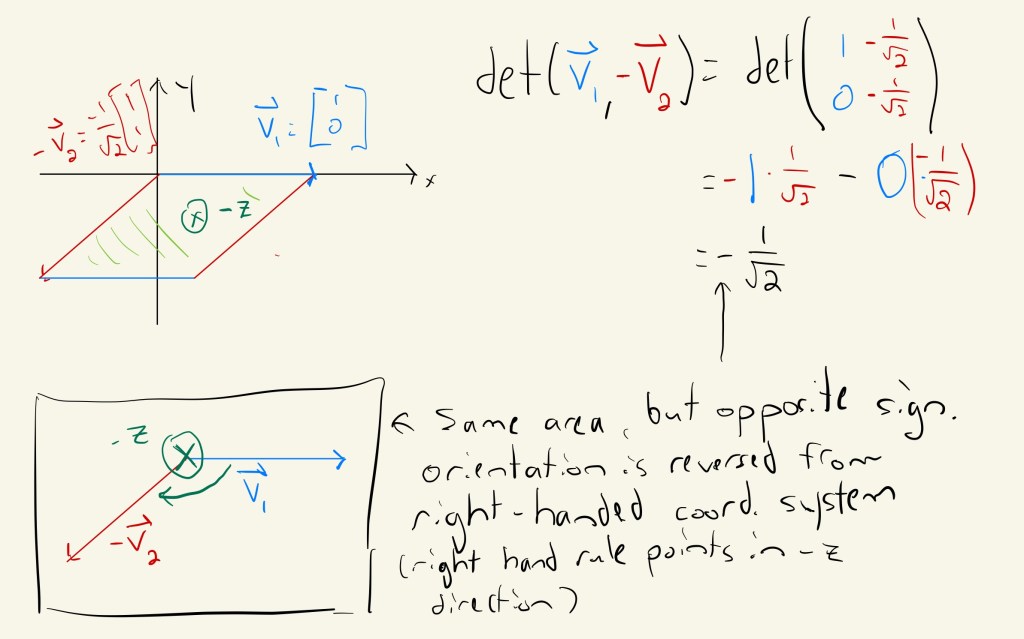
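Here is a small sketch of that statement in plain Python. It assumes the inputs are the columns of a 3×3 integer matrix (the `columns` below are arbitrary), computes the sign of a permutation by counting the swaps needed to undo it, and checks that permuting the columns multiplies the determinant by exactly that sign. The helper names `det` and `sign` are made up for illustration.

```python
from itertools import permutations

def det(cols):
    """Determinant of the square matrix whose columns are `cols`,
    by cofactor expansion along the first row."""
    n = len(cols)
    if n == 1:
        return cols[0][0]
    total = 0
    for j in range(n):
        # Drop column j and the first entry of every remaining column.
        minor = [col[1:] for k, col in enumerate(cols) if k != j]
        total += (-1) ** j * cols[j][0] * det(minor)
    return total

def sign(perm):
    """+1 if `perm` is a product of an even number of swaps, -1 if odd."""
    perm = list(perm)
    swaps = 0
    for i in range(len(perm)):
        while perm[i] != i:
            j = perm[i]
            perm[i], perm[j] = perm[j], perm[i]   # puts j in its home position
            swaps += 1
    return 1 if swaps % 2 == 0 else -1

columns = [[2, 0, 1], [1, 3, 0], [0, 1, 4]]
base = det(columns)

# Permuting the input vectors multiplies the determinant by the permutation's sign.
checks = []
for perm in permutations(range(3)):
    permuted = [columns[p] for p in perm]
    checks.append(det(permuted) == sign(perm) * base)

print(base, all(checks))
```

Cofactor expansion is slow for large matrices, but it keeps the sketch short and exact for integer entries.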

But what does this mean geometrically? The sign detects the orientation of the volume: if it agrees with the right-handed coordinate system it lives in, then it is

, and if it points in the opposite direction, then it is

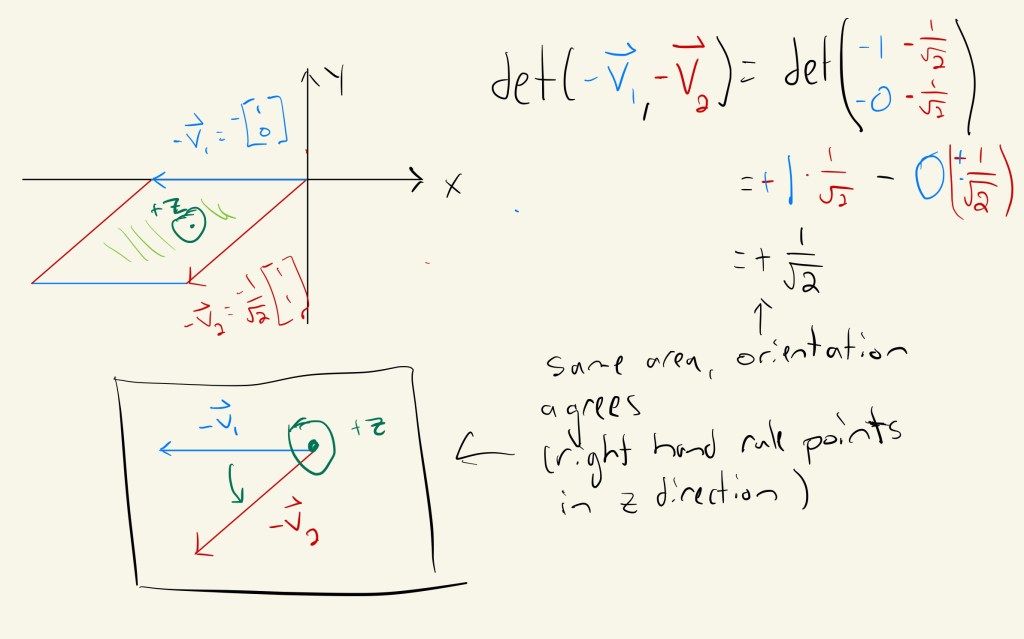

. As with much geometry, this will be much more clear in a nice picture in 2D. Here, we can define orientation as “where the right hand rule applied to our input vectors points” (we’re basically doing a cross product of our inputs). Look at what happens when we flip our input vectors!

So the antisymmetry property told us how orientation changes when we swap inputs: if we swap any two inputs, the volume stays the same, but the sign encoding orientation flips!
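The 2D picture can be reproduced numerically. In this sketch the vectors `u` and `v` are arbitrary, and the plane is embedded in 3D by appending a zero so that the usual cross product applies; the helper names are made up for illustration.

```python
def det2(u, v):
    """Determinant of the 2x2 matrix with columns u and v: the oriented area."""
    return u[0] * v[1] - u[1] * v[0]

def cross(a, b):
    """Standard cross product of two vectors in R^3."""
    return [a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0]]

u, v = [3, 1], [1, 2]

area_uv = det2(u, v)   # oriented area of the parallelogram spanned by (u, v)
area_vu = det2(v, u)   # same parallelogram, inputs swapped

# Embed in 3D: the right hand rule points along +z or -z.
normal_uv = cross(u + [0], v + [0])
normal_vu = cross(v + [0], u + [0])

print(area_uv, area_vu)       # same size, opposite sign
print(normal_uv, normal_vu)   # z-components match the determinants
```

Here the area is 5 for `(u, v)` and -5 for `(v, u)`, and the cross products point along `+z` and `-z` respectively.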

A warning: this actually doesn’t make any sense for higher dimensional volumes! The cross product is special and really only works in dimension 3 with two vectors as input. In fact, mathematicians use the determinant itself to define orientation for more general volumes: take a “standard” ordered set of vectors, like the standard basis vectors, and define orientation by checking the sign of the determinant of the matrix mapping them to a new coordinate system. If the sign is $+1$, the orientations agree, and if the sign is $-1$, they disagree.

This basic idea even allows us to generalize to shapes called manifolds, which aren’t just flat space. These are fundamental objects to the world around us, and they’re absolutely fascinating. You’ve been working with circles and spheres your whole life, and I’m sure you ran into the torus the last time you craved a glazed donut. You’ve probably even run into a few Möbius bands too. Some of these manifolds, like the sphere or the torus, have meaningful orientations, meaning you can define an “inside” and “outside”, and others, like the Möbius band, do not. The wiki for the Möbius band has some delightful animations explaining why they cannot be oriented. But ultimately, the reason they cannot comes down to the definitions used to construct manifolds and properties of the determinant. If you want to learn more about this sort of thing, check out differential geometry. It’s a beautiful field of mathematics.
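As a rough sketch of the determinant-based definition for flat space, the snippet below computes the orientation of an ordered basis of $\mathbb{R}^4$ relative to the standard basis, where no cross product is available. The bases are arbitrary examples and the function names are made up for illustration. The basis vectors are passed as rows, which is harmless because a matrix and its transpose have the same determinant.

```python
from fractions import Fraction

def det(rows):
    """Determinant by Gaussian elimination with exact rational arithmetic."""
    m = [[Fraction(x) for x in row] for row in rows]
    n = len(m)
    result = Fraction(1)
    for c in range(n):
        # Find a row at or below the diagonal with a nonzero entry in column c.
        pivot = next((r for r in range(c, n) if m[r][c] != 0), None)
        if pivot is None:
            return Fraction(0)
        if pivot != c:
            m[c], m[pivot] = m[pivot], m[c]
            result = -result   # a row swap flips the sign: antisymmetry at work
        result *= m[c][c]
        for r in range(c + 1, n):
            factor = m[r][c] / m[c][c]
            m[r] = [a - factor * b for a, b in zip(m[r], m[c])]
    return result

def orientation(basis):
    """+1 if the ordered basis agrees with the standard basis,
    -1 if it disagrees, 0 if the vectors are not a basis at all."""
    d = det(basis)
    return (d > 0) - (d < 0)

e1, e2, e3, e4 = [1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 1, 0], [0, 0, 0, 1]

standard = orientation([e1, e2, e3, e4])
one_swap = orientation([e2, e1, e3, e4])   # a single swap
cycled = orientation([e2, e3, e4, e1])     # a 4-cycle is three swaps, so odd
sheared = orientation([[2, 0, 0, 0], [1, 1, 0, 0], [0, 0, 3, 0], [0, 0, 0, 1]])

print(standard, one_swap, cycled, sheared)
```

Note that cycling the basis flips the orientation in dimension 4, whereas the analogous 3-cycle in dimension 3 is two swaps and preserves it.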

Wrap up

We’ve finally unpacked the loaded statement from last post: the determinant is an alternating $n$-tensor from $n$ copies of a vector space $V$ to the base field $F$, usually $\mathbb{R}$. The tensor part tells us the determinant is multilinear, so it respects scalar multiplication and addition in each separate component. The alternating part tells us that it’s an antisymmetric function, so when we swap two inputs, we should multiply by $-1$. Geometrically, the tensor part captures how volume scales when we scale individual sides of a hyperparallelepiped (just a parallelogram in $n$ dimensions), and the alternating part tells us whether its orientation agrees ($+1$) or disagrees ($-1$) with the right-handed coordinate system it lives in. Along the way, we ran into another fascinating tensor: the special case of a real inner product, which has our familiar dot product as an even more special case. This tensor was symmetric, and so when we swapped inputs, the output was unchanged.
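For reference, the two halves of that statement can be written as formulas. The symbols here are notation chosen for this summary rather than taken from the original post: $v_1, \dots, v_n$, $u$, $w$ are vectors, $a$, $b$ are scalars, $\sigma$ is a permutation of $\{1, \dots, n\}$, and $\operatorname{sgn}(\sigma) = \pm 1$ is its sign.

$$
\begin{aligned}
\text{multilinear:} \quad & \det(v_1, \dots, a\,u + b\,w, \dots, v_n) = a \det(v_1, \dots, u, \dots, v_n) + b \det(v_1, \dots, w, \dots, v_n) \\
\text{alternating:} \quad & \det(v_{\sigma(1)}, \dots, v_{\sigma(n)}) = \operatorname{sgn}(\sigma)\, \det(v_1, \dots, v_n)
\end{aligned}
$$

Taking $\sigma$ to be a single swap gives the familiar factor of $-1$.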

It’s worth mentioning that the symmetric and antisymmetric properties are very deep. Like, the fundamental particles of the universe deep. For instance, symmetry and antisymmetry allow us to distinguish bosons, like photons (the particles of light), from fermions, like electrons: under exchange of two identical particles, the wavefunctions for bosons are symmetric, and the wavefunctions for fermions are antisymmetric. This seemingly innocent distinction has profound physical consequences like the Pauli exclusion principle, which forces electrons to live in different states. This in turn is essential for all of chemistry and life as we know it: because electrons cannot occupy the same state, they are forced to arrange in special configurations within atoms, and these electron configurations ultimately govern the chemical properties of matter. If that weren’t enough, other flavors of symmetry, like rotation symmetry and charge symmetry, are central to the standard model of physics, which classifies all the known fundamental particles of the universe. But that’s another story.

Food: Mother’s day eggs benedict, with a twist: chipotle-lime hollandaise with garlic, coriander, turmeric, and mustard, sous vide eggs, asparagus, bacon, and some darn good english muffins made by Grandma. Yes, I am a basic brunch boi.
