Prereqs: an intro linear algebra course

The classic example of a partial ordering in any intro-to-proofs class is that of set inclusion: stuff like for sets

. But I wanted to share my favorite partial ordering, one that’s fabulously useful in fields like quantum information theory and control theory: the partial ordering of positive matrices.

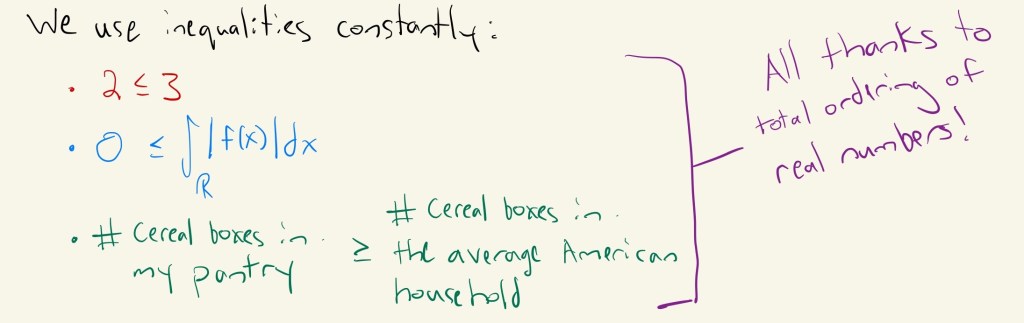

Here’s the rough idea. We use the total orderedness of real numbers in mathematics and life constantly, in the form of inequalities. Whenever you want to compare the size or quantity of two things, you need order!

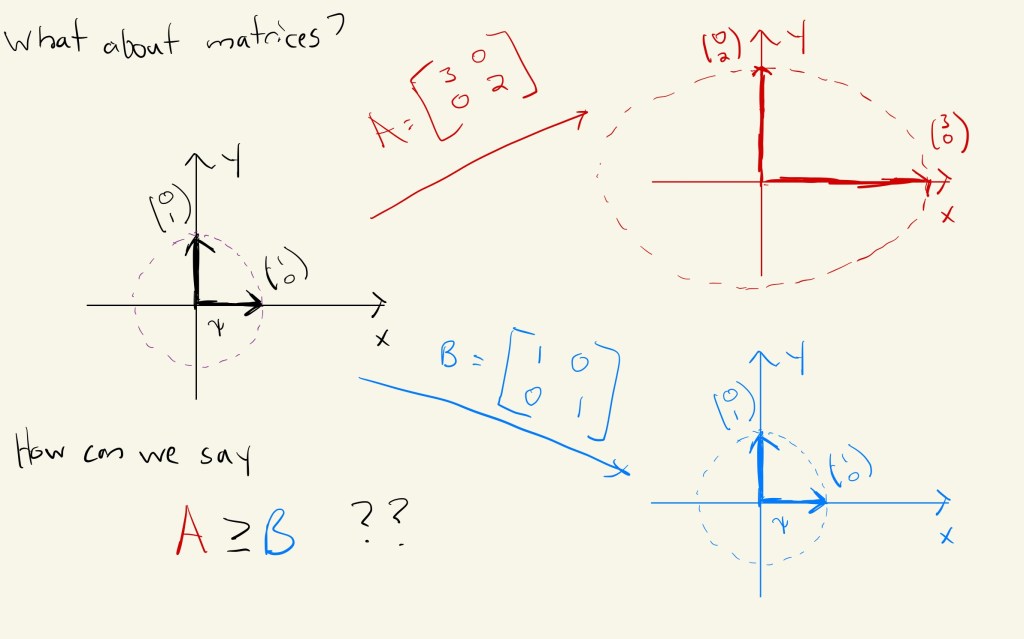

What if we wanted to compare the “size” of two square matrices? Is there a way we can meaningfully do this? Are these leading questions for the rest of the article??

Eigenvalues and Warping Circles to Ellipses

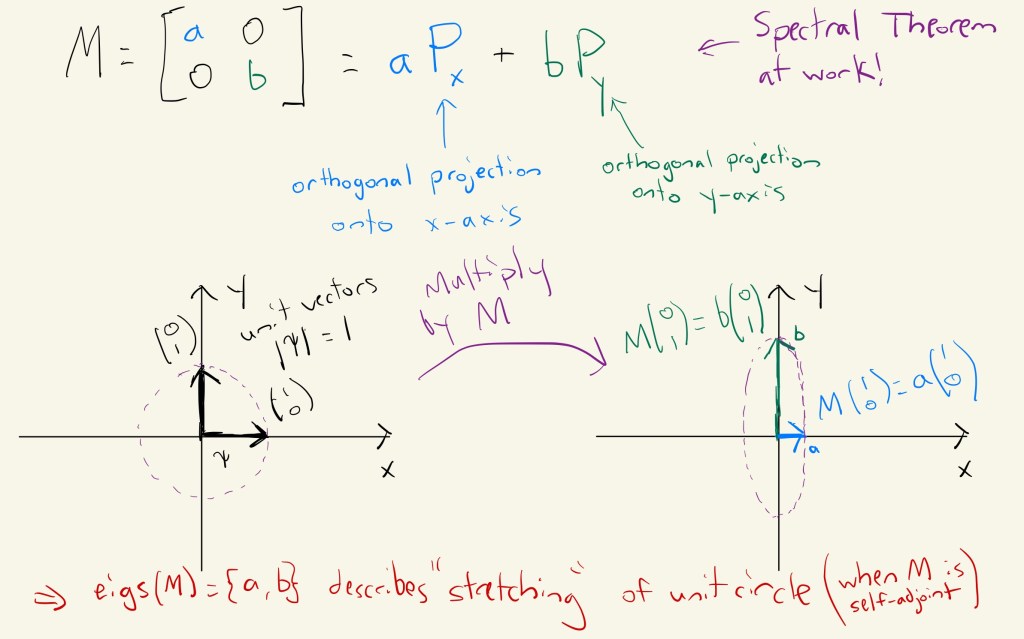

In linear algebra, you may have been exposed to the all-important spectral theorem, which gives conditions for a matrix to be diagonalizable. One key class of diagonalizable matrices, and the only class we’ll care about today, is the set of self-adjoint matrices, i.e. . Diagonalization decomposes a matrix (which you’ll recall encodes a linear map between finite dimensional vector spaces) into a sum of scaled orthogonal projections

, where the scaling is done by eigenvalues

(for a refresher, check out 3blue1brown’s wonderful video). Since self-adjoint matrices

have real eigenvalues, this gives us some very nice geometry to play with:

maps the unit circle to an ellipse, whose size is decided by the eigenvalues of

!

Now, let’s think about how we might compare the “size” of two self-adjoint matrices . Well, maybe this “image of the unit circle” business can give us an idea…

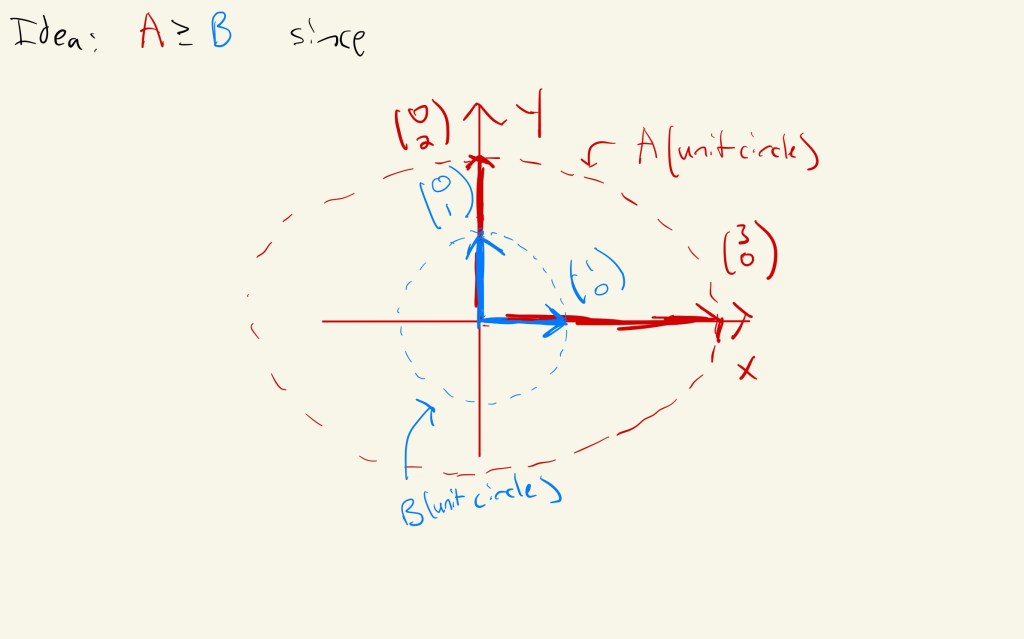

Huh…the images of the unit circle under these two maps are different sized ellipses. What if we compared them?

Hey! We can completely fit the image (unit circle) inside

(unit circle)! This seems like a good candidate idea for

.

Positivity and the Partial Order

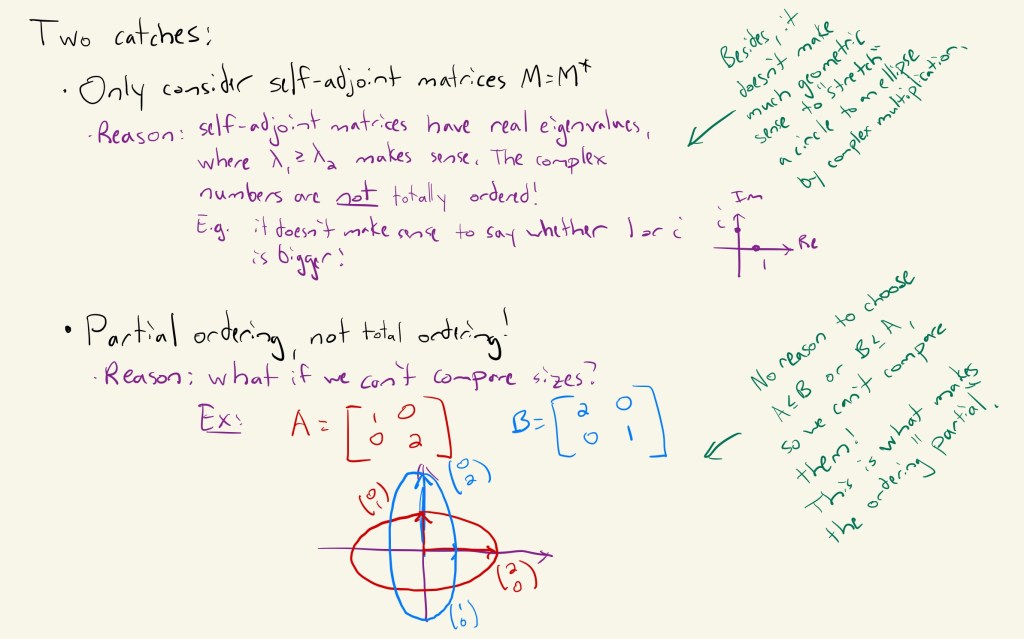

So…how do we turn this idea into something a bit more concrete? Well, we have to address two issues: the admissible class of matrices (just diagonalizable is too broad and loses geometry), and we need a looser definition of order than the strict “total order” of the reals (matrices can grow in many “different directions”, and we may not be able to compare two arbitrary matrices).

With these caveats in mind, we’re well equipped to formally define this partial ordering.

Now, be warned that there are many equivalent definitions of this partial ordering (and even different names–many authors prefer “positive semidefinite” to mean “nonnegative” , and “positive definite” to mean “positive”

). Generally the key to demonstrating equivalence is using the spectral theorem and some inner product shenanigans, but this certainly is a fine definition. In fact, it lends itself nicely to a class of more general spaces known as C*-algebras, whose theory allows us to analytically handle more exotic beasts like bounded operators on infinite dimensional Hilbert spaces. Crucially, operators in these spaces still have a notion of spectrum, which extends from the familiar eigenvalues to handle other flavors of “solutions to

“–it’s exactly this spectrum that gives us the notion of energy levels in quantum mechanics!

Epilogue

As always in mathematics, there’s much more to say. The first powerful application of this notion of positivity is that it allows us to define square roots of positive matrices–and as anybody who’s seen a quadratic equation knows, square roots are central tools in nearly every field of mathematics. Further, this definition gives convenient access to functional calculus on matrices for a variety of useful functions with restricted domains, meaning you can meaningfully write for a matrix

and a real function

(it’s not the only way, but it’s definitely easier). One of the most important examples is the function

, which after taking the trace of this function, we recover von Neumann entropy, a crucial measurement of the quantum information stored in a quantum state

.

In a different direction, this partial ordering bears a great deal of fruit in the worlds of optimization and differential equations, where knowledge of the sign of your eigenvalues has huge ramifications for knowing e.g. whether a critical point is a maximum, minimum, or neither, which in turn has consequences for the stability of dynamical systems.

I’ll finish with two quotes, the first from my advisor Bruno Nachtergaele, and the second from the wikipedia entry for Linear Algebra: “Linear algebra is all about diagonalization”, and “Linear algebra is central to almost all areas of mathematics”. A quick application of the transitive property should tell you “learn as much about diagonalization and eigenstuff as you possibly can”. See you next time!

Food: Dumplings! Made from leftover smoked pork with the classic ginger-garlic-soy seasoning, and topped with what I have taken to calling “magic sauce”–sweet aromatic soy sauce, chili oil, Chinkiang vinegar, and garlic, courtesy of Fuschia Dunlops lovely book “Food of Sichuan”. Shoutouts to my sister for making the dumpling skins from scratch…and huge respect for anybody who does the same, it’s an arduous task.

You mention the well-orderedness of real numbers, but the usual total order on the reals is not a well-order. Of course, by AC, there is a well-ordering of the reals, but it’s not the standard one. I think you meant to mention the total order on the reals.

LikeLike

You are absolutely right–I conflated the terms “well order” and “total order” (heh…it’s been a while since I took intro analysis). Thanks for reminding me, and I’ve accordingly corrected this post.

LikeLike